00:07

Hello and welcome to pure Accelerate 2022. My name is Bolland Sha, and as part of this Deep Tracks session will be talking about one. Does a day in the life of a Port works admin look like I'm a senior technical marketing manager with the cloud made of Business unit or the Port Works business unit at Pure Storage, and you can find me on social media at those handles. Lister.

00:30

So feel free to reach out to me if you have any questions relating to the content that we have planned for today or regarding any other Port works related questions as well in this presentation. Real specifically going to focus on water port works Admin da's from a day to day basis. Right post a few at this conference come from that storage background, and you know how difficult are easy It is to provisions,

00:55

storage, folio watches, machines and for your developers who need access to storage. We put on our develops hats and then figure out and walk you through the different things that are part of the day for a port works admin and how using port works can help you make things a bit easier. So as part of the agenda, we have things like bills get started with the basics. So how easy listed Deploy Port works on an

01:24

existing humanities classes because that's step one, right? Get any of the men benefits that put works brings to the table. You need to know how to deploy. Then we talk about the next at the chase Dynamic volume provisioning. So you have put walks up and running. How can you enable your developers to

01:41

provisions storage for their state, full containerised applications on demand on a dynamic way? Then next we talk about capacity management because again, once you have storage, the next thing is to keep track of the utilisation. And how do you expand your persistent volume? Surged idea Applications always have the storage at the near without going off line.

02:03

Next step in the cycle is talking about data protection in disaster recovery and how things are like. We'll talk about why and housing that different when it comes to containers in communities from those traditional applications and how port works can help you build those data protection. Disaster recovery solutions for your containerised applications and their next step is talking about database as a service like

02:28

Okay, once you have done all of these previous things, the next step in the evolutionists offering things as a service, making it easier for your developers to consume databases or data services. Removing that manual element for these deployment and how you can just consume as a A service stocks available to you and deploy any database instance on your communities cluster that you're using for development or running a

02:53

production work looks so with that, Baseline said. Let's get started. Let's start by talking about how to deploy foot works. So Port works today is put words enterprise specifically again. The portfolio consists of Port Works Enterprise. That's our suffered find stone Age or cloud. Need a storage solution that can run on any

03:14

communities cluster of choice. And we have p X pack up, which is the data protection tool that we look at later at the presentation, and then we have Cold Works Data services, which is that miss as a service offering that's available to all of our customers. So let's specifically talk about portals enterprise and how easy it is to deploy it in in on top of any communities cluster.

03:38

So today port work supports all and any community still astral that you can provide it. So if you just want to deploy and open source vanilla communities cluster, you can very well go ahead and instal in port works on it and have port works. A provision, the back and storage or consumed existing storage that you might have attached to those communities worker notes and aggregate them into a into a port.

04:04

What storage pull that can be consumed by state ful applications. But one thing I wanted to highlight its port works has built integrations into major cloud providers where we automate the entire back in storage provisions. So let's say, for example, you're running in Amazon s right. You have your E K s cluster. Any depot next step is to instal port works.

04:27

All you need to do is use our spect generator, which is port work central and generate a configuration and specify how many E. B s volumes you want and which size I ABS and true put requirements. You have per e B s volumes and these E V s volume will automatically be provisions attached to your ex work unknowns and aggregated into a single unified storage pool that you can use to provisions block and file

04:53

persistent volumes from that footwork storage. We have the same automation capabilities build into who will cloud platforms. If you're using Google back in pedis, you can new sport works and boardwalks will automate the provisioning of of all the back and stored is needed. Same thing with Microsoft Azure. Same thing with the MMD steer.

05:13

The MBA Tan Xue Port Works has built these integrations to make sure that our administrators are not spending time in provisioning the back in storage by spending more time and working with the developers and making sure you have a solution that can help you add business value to your organisation rather than spending or time on deployment and management of the back in storage.

05:35

So now that you know that Port works can help you provisions these back in and drives automatically, however, you'd actually deploy port ports, right? What are the deployment architectures so you can deploy port works in one of two ways. The first one is this aggregated the method of deployment where you have a huge communities cluster and against can be any favour of

05:58

communities, but you have a certain nodes inside their communities. Cluster that act as the storage notes so that they are the ones that have those back and storage discs attached to it and pro provide storage for any application that's running across the storage or storage listeners. So that's disaggregated. It allows you to scale of your compute capacity

06:21

of your clusters of CPO and memory without having to add more storage. This is important for work load times that might not definitely need more storage. They might more be more CPU or memory intensive, and storage might just be a small pension. So instead of having customers pay for all lords from a storage perspective, Port works can help you customise your deployment and used this biz aggregated apology.

06:48

Next, we have the hyper converged more dispel where each node in the cluster is a port work storage. Not so. This allows you to consume storage locally Israel, so you can use our open source logical stark storage orchestrator for communities which which is built in Newport Works enterprise and ensure data locality. So whenever a new community spot gets deployed,

07:12

gets pan Arab communities, works with Stark two provisions. The board on another, which already has a replica of the persistent volume available for support to use. This ensures that the order Ayo traffic is not going across the wild. The you can get better performance by just running the was the storage locally on the communities working towards where your

07:34

community spot is deployed. So that's the Khyber converged mode of deployment. And based on your use case, you can choose one or the other. Our experiment with it and find out which one works best for the workloads and applications that you have running on the Affair Communities Cluster Next decided where you are running it decided how we are going to deploy

07:55

port works. But then, like an inward topology, the next up is how you can actually deploy port works. The easiest way to do it is using our use Y based footwork. Central Don't. It's a simple viser where you can just follow four or five different screens. Select how you want to deploy portals using the

08:15

operator, which is the default way now versus demon, said. Have you what version of football. So I wanna run. What's the back in cloud or what's the communities distribution, right? Is it on? Is it one of the public cloud managed commodities distributions?

08:31

And then what does your back in storage ship likes? If it's E. K s, you can select the type of EBS volumes you want over from Iowa on GP to G P three and then specified all those I ops and bandwidth requirements as well. You can configure in your networking settings, or you can leave them as defaults. And then,

08:49

just at the end of that wizard, you will get to Yamil Files that you can just copy against your communities. Cluster and Port Works will automate the deployment of everything. For UN present, a unified footwork storage could, In addition, do the based method that are, if you are using, get ops. Work flows as part of your communities

09:10

deployment as part of your application life cycle. You can definitely use court works as part of your adopts pipelines as well, so you can have you can use things like flax seed, Serie too, have a specific section for port works. So as part of your cluster deployment your communities cluster deployment.

09:28

One of the things that can get added is the deployment for port works as well. So you don't need to do anything manually if developers around the using Get ops taken at Port Works has just an additional stat that needs to be taken when deploying communities. And then the last thing is using tear a phone or similar infrastructure escort to tear a form, we have to reform models that can be leveraged bye in developers and operators to deploy

09:54

port works on any communities. Cluster of choice If you're running in, the public club will integrate with those with the cloud terror for models as well. If you're running on Prem using open source communities, we'll use cube spray under the covers and help you deploy port works in addition to your communities. Lustre. So there are different options available for

10:15

you, which makes life easier. I like your own have to worry about manually provisioning C s. I drivers. Once you have your community slasher running as go personally gone through the deployment of F S based C s I drivers and have to lay, you may have to start with creating any offence file system creating those mount points or mount targets configuring the necessary I am rolls

10:38

attaching those I'm rose to your E K s cluster and then configuring the CSC. The C s a f c aside, driver on Yuri case clusters and then creating storage pools are they used? The exact five system idea was on figured on the back. And so, as you can see, it's a tedious, multistep process. And if you're doing this at scale,

10:57

if you're doing it across tens or hundreds of communities clusters, this can definitely slow you down. So worse is all of that manual work to sport works and make sure, like all of those things have been simplified for you and automated for you so you can have port works up and running as soon as you have a community is cluster.

11:16

So let's see. Now we have deployed our communities. Last report boxes up in running. What's the next? The next step is talking about dynamic volume provisioning. I know when port works are man, community start, great state Full journey. Things were not as easy or as dynamically provisions.

11:32

People had to configure the back in persistent volumes manually and then configured. Persistent volume claims insider applications to connect O's and use that persistent storage. But with communities there is a new, and that is a construct community storage class, which allows administrators to define different classes of storage to your developers so that they can automatically provisions or dynamically provisions.

11:59

Breed right ones on block and read. Write many of file volumes amazed on your if you're back in storage system supports those protocols or not. So clearly have an example of a simple community storage class where we have selected port works as the provision er of choice, and it said everything to the defaults. So if you're not using port was, you still need to use community storage classes and you can

12:25

provide things like provisions. Er, the reclaimed policy like what happens once the persistent volume claim is the leader. The volume binding more whether he wanted to be immediate or wait for the first consumer and then allow volume expansion whether you want a run nine persistent walling to be expanded in real time or not.

12:44

So all of these things can be specified, and a community storage class definition and can be made available to year developers. So from a developer perspective, all you need to do is in your application. Yamil file with the very you need persistent storage. Just create a PVC object and specified the storage class that he want to use for that persistent volume.

13:06

All the different parameters that are configured in the back and storage class automatically get inherited for your persistent volume claim, and you get all of those settings on the back and say, you know, no longer do developers need to open up tickets to configure the back in London's. Make sure those loans are mounted to the right B M air clusters,

13:26

right virtual machines, if you using something like road device mapping and then a highlighted or translated properly in proper assistant. All of that process will be automated for new using port books, so food was provides a next level of features when it comes to storage class definition. If you are, let's say you're not using port worlds.

13:49

If we are just using a basic C S. I drivers from one of the other vendors in the ecosystem that has a traditional storage offering, right, the capabilities that you can get inside your community. Storage Plus would depend on the physical array that you have backing the cluster, which is not the case when it comes Report works.

14:06

You can get additional benefits of features of parameters from port works, regardless of the underlying infrastructure of choice. So using import work storage classes you can create custom storage classes for individual applications are individually developers based on their needs. And these additional parameters can include things like the type of file system that you

14:28

need to be laid out so e X affairs or 64. You can specify the replication factor or the replica count for your persistent volume, so you can. If it's a just a estate application, you can set something as one apple factor has one, and it will only store one persistent volume. But if it's more of that production level

14:47

workload where you need that three replica spread across your community, Spencer's even if a note goes down, you still have access to all of your data, and your application can keep running so you can define this. These parameters the application factor as part of the storage class definition If you want a shared volume writer? Read. Write many. William.

15:07

We can use our shared before service to get us a loan balance. Rain point for the back in rewrite many one you can specify. I owe crore priority and I a profile. Sigh of priorities comes into the picture when you have multiple storage back ins, and some of them are running hard drive. Some are running assessed ease and some are running and B m E bay storage.

15:28

So based on the class of storeys that you have on the back and you can set different, I owe priorities. I a profile is another interesting factor. That parameter that Port Works provides since running since Port Works understands how different databases actually right data disc, We can optimise the performance of individual databases by setting different. I owe profiles and again we have different

15:53

profiles to choose from. But if you are not sure you can just leave it to auto. We will start monitoring the type of aye oh, that's return to the desk and then optimised in automatically set a different I owe profile that better suits your application on your database of chance. Additional parameters include placement strategies where you can set affinity and anti

16:14

affinity rules for your persistent volumes and your replicas. You can select whether you want encryption addressed for those persistent volumes and not by setting the secure flack. You can also set scare snapshot schedules rights of A by defiled. As part of the storage class definition, you can specify whether you want hourly snapshots for your persistent volume weekly

16:35

monthly snapshots for your persistent volumes and where you want to store those snapshots. So, as part of a persistent volume deployment, your developers don't have to worry about confidently configuring these natural schedule on day zero day, too. They are automatically configured once you have specified these para Meadors as part of it storage class definition.

16:58

So, lads, the ease of use that quote wall springs to the table, where you don't have to manually added each persistent volume. For these different parameters, you can set and create different customs storage classes which can be leveraged. Buy a developers to automatically provisions these persistent volumes with these specific parameters that you have said as the football

17:22

excitement. Next, let's talk about capacity management right, and this is really important because you might have hundreds or thousands and even more persistent volumes running as part of your different applications on one or multiple communities cluster. And if you are doing everything manually, you might have to use monitoring solutions to monitor the storage utilisation for each

17:46

persistent volume that you have running and then manually edit or expanders persistent volumes when they are running low on capacity to ensure that their applications are going off line. And this can get tricky, like if it happens in the middle of the night, very persistent volume is reaching. Capacity are reaching 100% capacity. You might have to wake up expand those volumes

18:07

manually. This is this sounds painful, and we have had customers. We have gone through this trouble, but with Port works with Port Works autopilot, Wendy's customer switched to port works. They were able to leverage something like put works autopilot to set. If this, then that rules as file called autopilot rules and have it running against

18:30

your communities. Cluster running port works where port works will monitor for those conditions, and whenever those conditions are met or triggered, we can perform certain actions automatically on your behalf. So let's take a simple example that you have 100 gig volume. And he said, the rule that if your 1/100 volume exceeds 70 gig of users,

18:51

so it's more than 70%. Just add another 50 gigs to my volumes of the 100 gig. Volume automatically becomes 150 gig value once the utilisation has crossed 17. It's a simple if decision that rule that you can specify. An I am l format execute against the port works. Flusher and boardwalks will monitor all the

19:12

different persistent volumes against different rules that you have said. So you can. It's not just one rule that you can specify against one cluster. You can have different label match different rules that can match with different labels for their persistent volumes and treat your post crest persistent volume different from your

19:29

Cassandra persistent volume. But over of this process, all of the persistent volume expansion operations can be all automated for you using port works. And then the next logical question to this that we get every time is okay, Ward. If my persistent volumes are continue to keep

19:47

expanding and I am running out of storage capacity on my boat. Well, storage good against you can have a rule in place for that as well. Where you can settle a. Specify a rule where be will automatically add more storage or more Back and storage to the port was storage pool, so your applications and you're persistent. Volume's always have the storage they need from

20:09

the underlying Portwood storage food, so this means we automatically provisions more EBS volumes. It's decision Amazon or more v MDK files. This is running on prime on B m a tan xue and expand your port work storage pool so you have more storage for your persistent volumes. And in addition, look both of these. We can also re balance your volumes across

20:29

different notes to ensure that it's on just one, nor that pegged for storage resource is and other northern not consuming. I have too many resources of you can have rules in place to balance out your storage utilisation as well. In addition to like the these If this and that, you can also specify max limits that you known want a denial of service attack or something like that that can write more and more data to

20:54

your persistent volume and exploit the the automation that you have in place. Right? You can set those Parliament thresholds or year max storage as part of each rule. So let's say let's go back to 100 big persistent volume. Example, right. You can have an upper limit where it will stop adding 50% more capacity to your persistent

21:14

volume. Once you reach 500 kids of total capacity, so it will. It will go from 100 to 1 51 50 to 2 25. And keep doing that until it reaches a capacity of 500 gigs. And then, at 1.4 totes won't automatically expand the storage unless you come back to your cluster. Added that Mac storage capacity and then pour

21:36

talks will take over again. That's all great, but Jax, an automated perspectives. But what about if you wanted to include or two pilot in Yorg adopts work flows right where you wanted to have a validation cheque before court works can automatically expand those persistent volumes or the storage schools for ing.

21:56

This is where a portal sort of pilot has that get ops based capacity management mind set a spell where we will monitor and measure and predict deals utilisation. But before acting on that condition or acting on the rule will made for the approval step. What does it look like? It looks like whenever rule gets triggered, we will generate a P R request in indogate debris.

22:18

Pop your choice and only once you accept that PR well, port works. Act on that rule and expand your wall. You mar expand your underlined put work storage cluster So this health and that additional layer of approval when it comes to your goddam space work flows. So let's take a look at what a not a pilot rule looks like in the Yamil form.

22:41

So here you can see view, have selectors where you can specify the labels that you're persistent volumes have, so you can have different rules for different persistent volumes of different applications. And port works will match these labels so you can have a rule for post rates. You can have a rule for Cassandra, and you can specify different threshold limits for them.

23:00

You have names, place electors. So in addition to the volume labels, if you have names spaces that run multiple applications of multiple types of databases. You can have different names, space electors as well, In addition to the labor's next agent, valued defined. The conditions like this is where you deter mined the threshold criteria. So you can specify in the in the Yamil file of

23:22

Bihar if the condition is if the persistent volume use it goes about 50%. So you can use that to monitor your storage utilisation and then specify actions. Actions is the the action that you want to take. So let's say here, instead of 50% I want to increase my volume size by 100%. So the 100 being volume when it crosses 70 gigs automatically becomes 200 gigs inside,

23:48

so you can specify that was scaled percentage as part of the actual section and then in the final one is enforcement. So if you want to use the get ops based approval workflow or cube, CDL based approval work for workflow, you can to specify enforcement set to troop and port. What's warned Actually act on any of these when these conditions that regard before or

24:12

unless the administrator has approved that action. So that's how we the easy it is to use port. What's autopilot in your community structures to automate the storage capacity management. And again, just imagine how you have been doing this manually for washing machines or for those cloud volumes and manually mon, monitoring the individual volumes and expanding those as and

24:34

when needed. All of that process can be automated and using. Put what sort of pilot rules on drop off your communities cluster next. Bihar's data protection and daily production is really important because our traditional tools don't really work with communities and will talk about that. He sins why they don't in the next life. But just by using containers of communities

24:57

doesn't mean that you, your data protection or disaster recovery requirements have. Conaway. You still have those recovery point objectives and recovery time objectors for your different applications is still still need to work with your application. Owners decide whether the applications that you are in discussion are Tier one applications mission critical counter for any indeed a lost

25:18

our they are here to Maybe they can do a couple of hours of our Pio and then, if you maybe 30 minutes of cardio and whenever there is, it is a statement 40 or three application which might be tested eight hours of NATO losses acceptable. Also, you still have to go through all of those things, but you need to use modern data protection tools because traditional back of

25:40

tools are meant to work with communities. Similar Lou. How we went through the transition of moving from a physical server Bare metal based data protection solution to a virtual isation solution where you talk to your be centre peas. Get a list of all the PM's and protect those VMS instead of those yes,

25:58

excise hosts. The same logic applies when you're moving from vocalisation to communities as well, where you need to talk to your communities server and get a list of all the different containers and applications that are running in different communities. Name spaces and protect those as a unit rather than just protecting the back in. Persistent volumes are back in storage and then

26:21

are when a disaster strikes are venue. Need to restore this application. Figuring out how to deploy those salmon finds you need a modern data protection solution that can help you a back of your entire application as a whole unit and then restore it to the same communities cluster or a completely different communities fluster as well. So let's see one such tool and action. So as part of the Port Works portfolio,

26:47

we have P X, back of which is one of our data protection solution that's available to you. It is built for communities, and four containers runs on top of communities itself so you can have it co located as part of an application cluster or running as part of a dedicated back of cluster spell. Or, if you don't want to be responsible for managing your data protection to you,

27:10

can also consume R P X back up as a service solution, which is a sass based control claimed that you can use in, disconnect their communities clusters and start backing up your applications that are running on top of this community slasher with the age backer, you can create those back up and rears back of jobs in single click. So you just select the cluster the name space and select what schedule you want to use for

27:34

backing it up and very want restored those back of snapshots all of that. And then you just hit a single flick and it gives you back up snapshot. And it takes a mac of snapshots and stores that of in an an s three compatible back of repository. P X back of also allows you to migrate your applications anywhere so you can be running on we, Amir Tan Xue.

27:54

But then, if you are on prime side coast down, you want to restore something to the public cloud on Amazon s E X. Back up a lousy do. Take that application that used to run on and do, but then move it to an Amazon E K s cluster this well, but you can go multi clouds from G to each year from Ikea. As to a case, all of those different combinations are supported using the ex back up.

28:17

PX backup also has roll maced access control and can help you comply with the 321 back up rules so you can have three copies of your data store across to different media and in one of those poppies is in a remote site so you can have an ENTRE backup repository and store a back of stamps starts in Nablus has three, which is running in an aid of lira state, a centre and then, with the adoption of community, is on the rise.

28:45

Even ransomware attacks are getting more prevalent. People are going after community slashes because they usually have a larger attack, surface or attack vector and encrypting your data. So using data protection rules that have support for object logs or object attention on the back in storage site, you can get protection that additional level of protection

29:09

from your ransomware attacks. Because either if you get hit by, you don't have to worry about your backup snapshots being mess bill. Because those those are in an an object locked object storage pocket so you can easily create our spinner Manu community slasher and restore for from those previous snapshots which you know haven't been impacted from Ransomware. So you don't have to worry about making those

29:34

kinds of their payments and figure, hoping that the hacker gives you, gives you the keys to AnAnd, decrypt your data and kept back up and run. You can use a later protection, too, and be ready for such attacks. So with the ex back up, you can have your containerised applications running across different north on your communities, lustre on them or in the public cloud and using the ex

29:57

back up, you can back it up and restore to a completely different cloud environment or completely different on front environment. One of the key features that we launched over the past year is removing the dependency on Port Works enterprise as the storage layers. And now, if you are using any C s eyes backed storage adds that has support for snapshots.

30:17

The ex backup can work with that storage back and it glows application snapshots and help you move back of those applications and move it to a different cloud. Solution. Addition, community solution. If Nick need and so that's a be running on eight of us, right? Euro need to instal port works enterprise.

30:34

You can just k continue using your E B s and E F s back storage auctions using docs I drivers and protect those applications and store it and and street depository same with Google Cloud. Same with the azure. Same will be MBA times who now they Now that the the MSC aside Driver has support for volume stamp shirts so all of those things are supported using the ex bank of and provide an application aware data protection.

30:59

Likely make sure that these snapshots, our application consistent and not just crashed consistent because with the distributed nature of applications and the distributed nature of the databases that you're using, you need to ensure that you are taking a snapshot at the right moment and flushing everything to disc before taking. That's not right, so Lee can be ex. Pack up allows me to create those three and

31:20

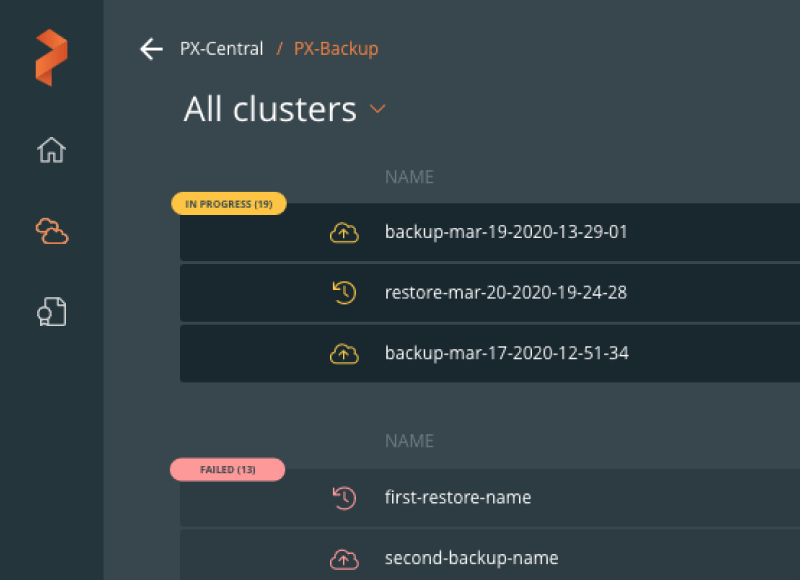

post back of rules where you can send those the those commands that you want to execute, where we write everything to data from memory and then take a snapshot on all your different parts at the same time, seeking new speaks back up to make sure your snapshots of application consistent Thanks, we have a couple of screen charts to June. Just highlight the van B X Pack up brings to the table and how easy backup and recovery for

31:44

communities is using the ex bank up. So on the left, you see as a screenshot of how easy it is to take a backup job. Our backup snapshots you just give it a name, he select the back of Target. It can be any yesterday compatible. Back up repository. It can be a mess industry as your block storage,

32:01

Google Cloud of Jake Storage pocket or foreign cram. It can be pure flash played systems as well. You can select whether you want to back up the application based on a specific policy. So an hourly weekly monthly periodic policy that you, as the administrator, can set and near. Developers can basically use P X pack up on a

32:21

self service basis, and leverage knows back up targets, and you're back up scheduled policies X. You can also select whether you want to specified US pre and post back of rules to ensure that your snapshots and application consistent and not just clash consistent. Once you have considerable of this, just hit that create button and you'll have an application consistent back up that's being

32:42

stored in that three repository for you. Now let's say you have to restore from a backup snapshots. All you need to do is navigate to be ex pack up, find this the upside that you want to restore from, and then initiate the restore process, right? So in this case, you can give it the restore job, a name you can select a destination

33:00

clusters. It can be the same cluster. Very or original application was running or it can be a completely new community slashed others running on prime or in the public club. You can do a default restore, or you can customise your restore as well so you can select the completely different storage back. Let's say you are running on geeky and you want

33:16

to move your application to the chest. You can use P X back up as that told to probe. Approve help with that migrations, you can change the back and storage for your containerised applications. You can change the name space if you want for your of an investor. Application should be restored to on the destination cluster, and then you can select whether you want to restore.

33:36

All our cheques are just a few against. If you are moving across clouds, ready will have to restore everything to make sure it application comes online. But if this is restoring to the same cluster, he might have accidentally the leader. The persistent volume you can just select a specific, persistent volume and restore your applications from that snapshot rather than having to restore the whole set.

33:55

So that's how easy it is to back up and restore applications when using the ex packer. But that's great. Good enough. But what about disaster recovery? So this is that next level where if you are using containers and communities, you don't have a solution that can help you protect your applications and build that synchronous or asynchronous disaster recovery solutions.

34:16

Port Works, p x d r. We have helped customers build E R solutions for their containers and for communities clusters, which has allowed them to promote applications to production. We have had We have financial services customers that use the exterior to run mission critical applications on human bodies. Introduction. Knowing for a fact that Port Works is handling

34:38

the storage, an application and my application application in such a way that you kn if my primary cluster goes down, I still have access to all of my data with zero data loss, and I can't bring the applications online really quickly. So without port once this gets really tricky because you might end up using a backup tools which might not have a destination, just a that's ready to go and and might not have that.

35:01

Zero r p o r can't deliver that. Zero r p of on your mission critical applications at the time of disaster, and you'll have to spin up a new communities. Fluster configure on your networking connexions and then performed that restore operations, hoping that the disaster recovery meant works of instead of worrying about all of that during a disaster. 11. The simpler option is to just use port votes p

35:25

x t R, and activate or use a single command, which is start serial. Activate migrations to restore near applications quickly on the secondary cluster that's already up and running of bringing that recovery time objective to a just a few seconds. Two minutes for further the governor operation. So let's talk about a few constructs. When we're talking about disaster,

35:46

the or put work speaks to the first thing you'd al you need to know is a cluster pair object. A cluster pair object is the trust object that between your primary and your secondary clusters that helps the that helps community is basically migrate everything that's running on primary in a specific name, space two year secondary cluster and helps with that migration successfully once you have the cluster pair object created next is to create a schedule

36:11

policy, object and against schedule. Policies are important because they help you map your migration jobs to your application specific place. So if you are using the synchronous disaster recovery solutions, your leader is always getting replicated. So even before you're right, has acknowledged packed in the system. Port Works will copy over the later to the

36:34

secondary cluster and make sure a copy exists at the secondary side before the right is acknowledged. But you still need a scheduled policy to copy all of your communities objects, which don't change that frequently if we are being honest so every 10 minutes and the 15 minutes every hour you can copy or deployments and service objects from your source lustre to your destination or a from a primary two year

36:55

secondary cluster. And then, to put all of this together, you need to have a migrations. Can you'll object? Migration schedule Object basically links your cluster pair object. You're scheduled policy and to a specific name space so you can have a migration schedule for individual applications and have different schedule policies associated with them.

37:17

And then you can specify addition, the para metres like include Resource is, which is secretary, which, because we want port works to copy not just the persistent volume, but also all the communities. Objects at a running on talk are you need to specified things like start applications as Paul's because since this is an ongoing migration at that specific intervals, you don't want your application to come on line of the

37:37

secondary side while the prime minister it is still active. Include volumes this can change is a fear using synchronous disaster recovery solution. It's one single stretched footwork fluster and will handle the migration at the storage level. Seek unspecified at us false. But if you are using in a sing D R solution, you need to specify that parameter is true because he would do want,

37:58

as part of each migration objects, persistent volumes or the game blocks that have changed to be migrated from your primary to the secondly cluster. So this is how everything comes together. You can have a migration schedule, bar name, space per application in near primary Cluster and move your applications to our copy or applications to the secondary cluster made from these migrations cable objects so many are

38:20

using a synchronous disaster recovery solution. As we said, it's a single stretched port works cluster between two community Slusher. One key requirement is you need to ensure that is that your source and destination of primary and secondary cluster can meet the 10 milli second round laden see requirement and which allows court was to create that stretch cluster with an external external CD key value Really

38:44

restore as the quorum side. And whenever you have such a deployment, Port Works can will basically copy any later. That's written on the primary side to the secondary side. And then you can have migration schedules in place to move your communities. Objects from him. Primary. Get the security side.

39:00

So one of our customers, Royal Bank of Canada, actually used uses port Works, P x t R, in production for their mission. Critical financial applications, where they absolutely can't afford to have any sort of data loss and have to migrate. Have to be ready for the scenario that if their primary question goes down. They bring up their applications quickly on their secondary cluster without any data loss.

39:25

So that sink minister, it's Astra Ecology Extra have asynchronous disaster recovery solution as well. So we want a bill that geos, a wide, geographically distinct community slashers, maybe have a cluster in US East one. Have your secondary cluster in us, Westell. So if there are any region failure site, we have seen us East one go down a couple of times over the last year.

39:45

If that scenario happens, you can still bring up your applications on your second cluster, maybe running in a different lead earlier season as quickly. Irresponsible. So in this case, where poured words will follow the migration schedule object that you have configured, follow the schedule policies to meet the application and copy or persistent volumes the changed blocks and your communities

40:07

objects at that fess ified interval from your primary with the secondary side, so that this is a decision area when you won't have zero r p o. You're our PO will match the schedule policy that you have set as part of the the configuration and Brandon Dory in the recovery process is the same. All I need to do is long India. Secondly, cluster execute a single command,

40:28

a start city inactivate migrations further names race and will bring up all the different resource is falling again. We have, like specific demos for all of these different prez. Our Biz Astor Recovery Solutions on our YouTube channel. If you want to see this in action next now, that young done leading idea of instal port works years enabled your developers to

40:50

dynamically television storage. You have automated the capacity management your major. We have data protection in disaster recovery solutions in placed. What's next for us? The port work, Sandman A. What's next is delivering date amazes as the service or making sure your developers don't have to learn how different operators work for

41:08

post. Rest our case, Sandra or reddest or or manga D B and how you can run the host like deployed us and then managed to also have a Can you buy back up mint perform Ackerman. Recovery furthers individual custom the sources that those operators have deployed. So this is part of a huge set of challenges that our database arguments face where the day they still need to think about scaling,

41:31

and some of these operations are. It's killing it manually. So if you are not using a manage service in the cloud, if you're running it manually on two instances, might have to manually provisions 82 instances added to your post rest lustre Raza read replica. Make sure the application happens. Performed those manual Shaar ING operations. All of that becomes very tedious are you need a

41:50

solution that can help you scale easily and add more ports and more storage as quickly as you wanted next days. Operational complexity. So there is that management overhead, right? Like if you have decided on using data bases on communities, you might have to figure out what operator to use for that deployment and how to manage those and you need. Usually you need specific or Assamese or

42:13

individual estimates for individual type of database offerings. We might have our the an S M E. That works with relational databases, one with with non relation later basis, document databases and no steeple leader basis, and so on and so forth. All of this operation of complexity does take away time from innovation and and and keeps those assemblies just focused on the back

42:36

end infrastructure It. Also running these manually also gives you less time to work with your product engineering and help them mill better products. It basically reduces on that time because you are, too. For based on the underlying infrastructure. There is another interesting challenges. Bill versus by organisations are going through

42:57

this modernisation journey. If it's just for a small team or if it's for the entire organisation, there is that bill. Verses by were, for a cloud, Need a solution, whether you want to build in that expertise or user inhouse expertise to build a custom database of the service pack for your customers or une want to offload that to a vendor of your choice that supports all of these different database

43:19

services on and helps you with Day zero and Day two operations for individual databases or data services and then a deployment bank right? Like if you're not running on communities, you might still be running these on washing machines and with washing machines that comes with the whole set of things. You have to provisions IBM from your lease wearing restore.

43:38

You make sure you have been of capacity in the back in London and then, once your virtual machines done, installed the guest over his, maybe you have a gold and template for it, but you still need to like provisions. The database instance on the washing machines or a lot of time goes into just deploying individual later bases, and it's not self service at all.

43:55

You need to reduce that and user managed service that can provide ourselves of a solution for your developers, where nobody spending time based on a specific Y or an calling back in later basis can be Prohibition and Connexion strings can be provided to your developers. So this is where Port Works Data Services. PDS comes into the picture where Port Works provides that single,

44:18

unified SAT waste control plane where we have a catalogue of different databases. Are data services available for you? All you need to do as administrators is idea Communities cluster and then have you develop a spin on these individual databases or data services on on different communities justice that they have access to again? There are sessions around PDS at this, a pure accelerate if you want to lawn more,

44:42

and maybe even get some hands on time or look at a demo of periods and action. But just to show you a glimpse, right, so this is how easy it is. You will have access to a user interface Value can specify things like the name of your database, the Target communities cluster and the name space to. This can be an Amazon, I guess. Cluster A.

45:02

Microsoft. A case Last er ran I'd open ship Cluster of vanilla Communities Open source cluster. Any community slash trophy a choice can be selected as target once it's added to the PS control plane. Select the name Space. Select data may specific application consideration templates. There are a few that we offer By default.

45:19

Administrators can create their own templates as well. If needed, you select the T shirt size for their database deployments of Test EV. You can select a tiny or a small for production. You can select something that's on more on the larger resource site you can select the number of north is the type of stone, and you want to conficker on the back and whether you want to enable back up as part of

45:38

the database deployment or not and where we want to store those back of jobs at it once the first five hour of these, which is basically selecting things from a trauma on menu when they will deploy PDS control plane will talk to your communities cluster and provisional those databases for you and then that zero and then as day two operations, it will help you perform scale operations. If you want to scale up,

46:01

add more of may change the official sized scale out at more replicas, changed the back of storage capacity, create those air back jobs or add a new back of jobs to for your individual databases. Call of that can be done using the PDS can also use the PDS you to monitor your databases so will get metrics which are not just specific to your storage layer. So in addition to like getting those I obs

46:26

through ports and Leighton see graphs. You're announcing an application specific doubts the number of transactions per second, read and write. All of those things can be monitored using the PDS itself. So now that you know what goes in the life of a put works as men or a day in the life of excitement. I'm hoping you are already thinking about Maybe

46:50

Step one, Step two, step three. But when, regardless of where you are in that journey, whether you're still deploying port works in trying a hands on you are and appointment you're using intermediate capacity management using or put what sort of pilot or you're milling those disaster recovery solutions using the extra. If you want to talk about what's next,

47:08

you can get started like you can get started with port work. Smith put Works Essentials, which is a far over three world. You can get started with port works for flash arraign flash plates. If you already have a Flasher and slash late, which I'm assuming you do because you're listening to a cure. Accelerate tracks.

47:23

You Bahar put what C s for Flash Harry flash play that's included in that visit your purchase of Flash l E Clash. I have a 13 a free trial for Port Works Enterprise, which brings in all of that advanced capabilities beyond the tryout. R P expands up pool. There is a free 30 day trial for the Y tool, where you can instal and confidently expect

47:43

upon their own. Or, if you want to just use the SAS based for state Serviced Airport. What's provide Use P X back up by the service. 1st 3 30 day trial is spent and if you are already done all of this and you're looking for PDS. Just reach out to your sales rep and will get you access to the PDS portal and you can start deploying those database instances on your

48:02

community. Slashers are already have put. What's enterprise? When that I would like to thank you for your time today. This has been a great session for me to prepare for and the present. I hope you you launch some new things as part of this presentation you had fund. I wish you have a great rest of your accelerate.

48:20

Thank you so much for your time today until next time and for watching