30:05 Webinar

Practical Application of Analytics, AI, and ML. Learnings from Financial Service Institutions

In this session, we will discuss how analytics, AI and ML are being used in the finance industry to drive faster insight and value in supporting investment strategies, electronic transactions workflows, and line of business customer demand.

This webinar first aired on June 14, 2023


All right, good afternoon, everybody. Thanks for joining us here. Appreciate it. Welcome to Pure Accelerate 2023 and our topic: practical applications of analytics, AI, and ML, with learnings from the financial services industry. So I am joined today by my two esteemed guests here.

I've got Greg Johnson from JPMorgan Chase and my colleague, Victor Alberto Olmedo. Gentlemen, if you wanna give a quick intro, then we can dive into the fun stuff. Yeah, I just wanna say at the outset, I was promised barstools. So this is subpar, a letdown, but we'll get through it.

My name is Greg Johnston. I'm the global head of electronic trading services for JPMorgan. We operate in 60 colos globally. These are Red Hat Linux builds, bare metal. In terms of Pure standards, they're considered dark sites: there is no internet, these are private network clouds, all interconnected

with essentially a private network of clients and exchanges. So anywhere there's an exchange that we want to trade with, we're connected to them with dark fiber. Awesome. My name is Victor Alberto Olmedo. I am a global staff architect, focusing on analytics and AI, and

I have the pleasure of having Jay and Greg here as one of our customers. Cool, cool, great. So, I mean, let's dig into the topic, shall we? We've got about 30 to 35 minutes here to riff on some topics we could probably spend the better part of the afternoon talking about, if they let us.

But we'll try to keep it under wraps, right? So, you know, AI and ML are huge topics these days. Mainstream media coverage of things like ChatGPT has really turned those into household words, but I think a lot of people forget or kind of fail to realize these technologies aren't new. They've been in the market for years and years, and the financial

sector has really put them to use in extremely powerful ways, especially when you think about things like customer experience, gaining market share, increasing ROI, and generating new ideas. So we'll dig in and go from here, right? So here we go: gaining market share.

So Greg, first question is going to you, man. High-level insight when you think about this topic: what are some strategic initiatives the bank's undergone through history? And, you know, good, bad, ugly, any challenges that arose, how did technology help you guys through those challenges? To use the word

"the bank" is a big thing. We're 270,000 people. But, you know, in terms of my space and trading, our biggest problem is we have very sparse real estate and it's expensive. So we're looking for simplicity, we're looking for scale, we're looking for the ability to easily scale without taking up

massive amounts of rack units. And, you know, one of the biggest challenges we're seeing is the amount of data is doubling and tripling literally every single year. And if you're going to run AI/ML and other stuff against that, you need to store that data somewhere.

And most of that stuff, just because of the large volumes of that data, we have to store on prem because it's not something that is cloud worthy. You know, I know we're going to get into the market data stuff a little later, so I'll stop there. So that's awesome. And obviously the real estate and

your proximity to the exchanges is an important factor in your decision making. So we see at a macro level that global finance customers are really looking to gain that market share; market share is a very important component of their business. And since it's typically very diversified, we see very different approaches in the different lines of business.

So for your line of business, I think it's very unique because of the requirements that it has. Typically, it goes beyond what's available in the traditional ways to deliver technology, and you've architected to support that very, very low-latency, high-frequency, and analytics workload. How have you seen that kind of unique

demand affect some of your decision making in your technology choices? Well, you know, I'll say first off, I'm not a storage guy. And I don't mean that in a bad way; storage is not my job. Storage is really just a service that we provide. There are larger groups within the firm where

storage is their business. We're merely providing storage as a service, you know, to all of my end users. And one of the things that we see with that: Pure is simple. It works, we configure it. If we need to scale, we can scale, and it's really the simplicity and the robustness of it.

It works well. Well, I love that you said that it's not your core competence. It wasn't "competence"; it's not my primary job. No, but in the sense that the things that you're delivering to the business are those outcomes.

Not necessarily what you use to provide that, but the overall outcome that you help deliver for your organization. Yeah. And, quite honestly, the nice way that you guys deliver the product is I don't need a PhD in storage to be able to manage storage. Exactly. You were mentioning stuff about,

you know, uh proximity and data center locations. Um Have you guys been able to get creative with maybe, you know, repurposing rack space or, you know, not having to take adjacent cages because you're able to shrink the footprint down a bit and reutilize some of that. Yeah. No, absolutely.

You know, that's something that we're seeing right now. We have FlashBlade, we're actually moving to FlashBlade//S, and a lot of that is really not just improved throughput. It's really the fact that within five rack units, I can fit 1.2 petabytes right now. And I'm sure next year when the DFMs get bigger, I'll be able to do even more in that,

in that specific rack space. Rack space is definitely at a premium, you know, especially we have a situation where you have, you know, multiple chassis that you need to interconnect and you can't put them at opposite ends of uh of the cage. So, you know, from that standpoint, it's fairly easy to fit everything you need uh within,

you know, a single cabinet or at least an adjacent cabinet and have that work. Yeah. And that actually ties into this thing that we're talking to customers about, which is that your data has gravity; it is one of the weightiest assets that you have. And when you want to do things like innovate

and iterate quickly, you need to be able to not have to do a full shift of that data, especially when we're talking about market data, the density of it is very frequent. And there's also new ways to correlate that data with potentially other parts of the business or potentially other novel data sets to help your organization kind of do some novel stuff. No, absolutely.

And, you know, we're actually seeing kind of data sets that were traditionally bound to... well, I should mention, you know, we support rates, equities, FX, you know, across the plant. Pretty much every asset class. But we're actually starting to see a lot more data that we already store that different

groups in other, kind of, non-traditional... you know, if you take equities data, I have FX folks who are now looking at equities data, rates folks who are looking at equities data. So we're seeing a lot more cross-platform stuff and also a lot more correlation and other things that are occurring

across that market space, either as leading indicators or, you know, as part of an algo. So it's interesting to see that. Yeah, I think one of my first experiences with that was working for an energy trading company, and we actually had on staff folks that were, you know, meteorologists, professional meteorologists

that were sitting there and helping us, uh, assess when these commodities are being transported across the ocean, how weather might affect the timing of delivering those things. And that was my first kind of novel overlay of data on trading decisions. So it's, it's very interesting to hear that your organization is also doing very novel things across the data sets and the, and the instruments that you,

yeah, you know, in a lot of cases we're actually creating these data lakes where pretty much anyone can go in and pull what they're looking for. We're using them to, you know, prime algos. It's, you know, if you build it, they will come, and,

you know, the more data that you have out there, the more data there is to mine, the more correlations people can discover, and it just kind of builds on itself. I don't mean to steal the thunder. We've got a topic on that coming up. OK, let's save it. We'll riff a little more. Right.

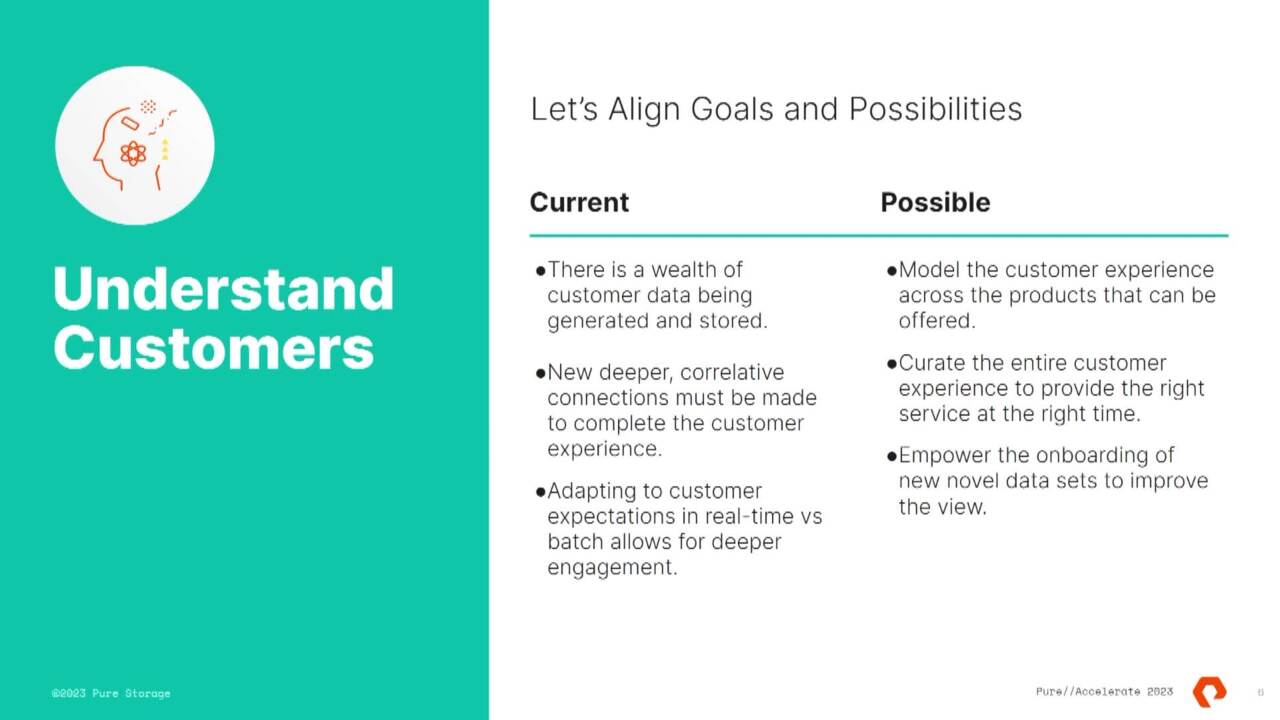

So let's pivot into understanding customers. Right. So this one is an interesting one for me. I mean, anybody who's got kids in the audience, I'm sure it probably would be interesting to you. So, the way I view it is, you know, children and the next generation are coming into the world pretty much with technology at their fingertips from the moment they're born.

And I think that kind of leads this generation to now have different expectations, much higher expectations and sometimes quite honestly wild expectations about things like speed, efficiency and customization of everything, right? So now that can produce challenges, but it can produce like vast opportunities as well, especially when you think about the financial services sector.

And so, you know, Vic, you've been in this sector for over two decades, you've seen a lot of technology advancement. Sorry, I don't mean... right. I think the gray hair does it. You've seen a lot of technology advancement. I mean,

what are your thoughts around AI and ML and how they're really empowering institutions to get to know their customers better and create bespoke offerings for you? Yeah. Well, so interestingly, our financial organizations have quite a wide swath of information on everything that we do on a day-to-day basis.

My banking institution is my investment institution, it's my transaction institution and, and what we're seeing is this very deep amount of varied data sets that can be applied to understanding a customer, their behaviors where they like to shop, you tie that in with other location based services and analyzing those behaviors and modeling. That means that hey, as an organization,

I can probably look at offering a wide set of different financial instruments and give that to the customer at the right time. And also, I think, to your point, gone are the days of big batch processing or trying to understand where your financial picture is potentially on a monthly basis or a weekly basis. Now we all want it instantaneously. We want to know exactly where we are and

how our strategies are playing out in real time. Yeah, on your screen, in your face, 24 by seven. Yeah, I just keep looking at it all the time. You know, we talk about the sexy stuff, which is the finance: how do we make more money? How do we write better algos?

But we're using it across the board. You know, we produce terabytes and terabytes of log files. We're using it for anomaly detection. We run our own networks, our own switches; we are pulling everything off of that, looking for anomalies, looking for problems, trying to be predictive. Every day

there is some different way that we come up with: how do we use AI/ML to detect this stuff before it happens? Because a lot of the places that we operate in, we don't go into those sites during the week. So we need to be able to survive a single point of failure. So we build in as much redundancy as possible.

And if there's stuff that we can get into logically before something turns bad, you know, anything that can detect that for us is a bonus. So, do some of your folks do, like, Monte Carlo simulations for anomaly detection kind of stuff? We have stuff like that, and we also have machine learning that is literally just going through, you know, millions and millions of lines of log files and

other things just looking for anomalies. Uh You know, sometimes it doesn't even know what it means. It's just like this seems anomalous, this is different, this is different. Maybe you should take a look at that and you know, that that's that, that's the biggest thing. It's just it, it's crunching numbers and it's simplifying stuff.
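As an illustration of the log-scanning idea described above, here is a minimal sketch that flags log lines whose normalized "template" is rare. This is not the speakers' actual tooling, which the session does not describe; the function names (`template_of`, `rare_lines`), the `max_share` threshold, and the sample log lines are all hypothetical.

```python
import re
from collections import Counter


def template_of(line: str) -> str:
    """Collapse variable parts (hex ids, numbers) so similar lines share one template."""
    line = re.sub(r"0x[0-9a-fA-F]+", "<HEX>", line)
    line = re.sub(r"\d+", "<NUM>", line)
    return line.strip()


def rare_lines(lines, max_share=0.01):
    """Return the lines whose template makes up at most max_share of all lines.

    max_share is an assumed, illustrative threshold, not a recommended value.
    """
    templates = [template_of(line) for line in lines]
    counts = Counter(templates)
    total = len(templates)
    return [
        line
        for line, tmpl in zip(lines, templates)
        if counts[tmpl] / total <= max_share
    ]


if __name__ == "__main__":
    # Hypothetical switch logs: many routine lines plus one unusual one.
    logs = [f"port {i % 48} link up latency {100 + i % 7}ns" for i in range(500)]
    logs.append("port 12 CRC error count 3")
    for line in rare_lines(logs):
        print("anomalous:", line)
```

Like the system described in the session, this kind of check does not know what a flagged line means; it only reports that the line looks different from the rest and leaves the interpretation to a person.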

And I've had some other customers kind of trying to do the anomaly detection as their first fundamental analytics, AI, and machine learning modeling. And that's really, I think, the low-hanging fruit, and the finance industry is doing that risk analysis to understand when there are problems potentially coming up.

So that actually ties into something that I'm seeing across other large global customers, which is this very big dive into observability as a component of this data pipeline. But the machine learning and AI to detect and maybe potentially take corrective actions in the future is something that everybody is starting to explore.

Yeah, absolutely. You know, we measure time in single-digit nanoseconds. So, you know, just imagine there are hundreds of thousands, millions of trades that are occurring on a daily basis. We are low-latency trading, which means people expect it to be low latency. So you need to be able to be out there, basically inspecting every transaction

to know if you're actually hitting those marks that you're supposed to get. And, you know, as markets become more fragmented and, you know, trades become smaller and smaller, um it's a lot to look at. So, um you know, you, you need, you need these deep analytics to be able to find this stuff. And again, you know, detect problems, you never know what the canary in the coal mine is going

to be. Uh But, you know, when you start seeing certain anomalous behavior out there, you know, that's a place to start. Yeah. No, absolutely. Yeah, that's actually probably a great segue into the next one, which is uh generating new ideas, right? So, you know,

as solutions evolve and as testing evolves, in order to gain market share, in order to understand your customers better, a constant flow of new ideas has to be present, right? And even as you guys are riffing on the whole topic of algorithms, generating alpha requires tons of new creative thinking day in, day out.

And that really brings tons of data, right, from various sources, different sizes and sets, different types of data. And the way people test and define their success kind of changes exponentially with that. We've seen a lot of institutions out there using solutions like kdb+, the time-series database,

bringing the element of points in time. And what can that point in time do to really change the trajectory of, will it be successful today? Will it be successful in the future? And to do that, the data set just grows exponentially. And Greg you've been saying that all along as we've been sitting here,

right? And so it really starts to bring scalability into focus, right, as a critical element of success. So can you give us some insight as far as you know, what has the bank done to kind of stay ahead of the curve when you think about exponentially growing data sets and you know, keeping that volume in check and being productive with it?

Yeah. No, absolutely. I'll give you an example. There's a market data feed called OPRA and, you know, two years ago it was a 10-gig data feed, then it went to 40; it's 100 now. And, you know, if you're going to do back testing, if you're going to write proper

algos, you need to record every tick, you need every trade, you need everything that is in there, and you don't have a choice. So not only are your networks getting bigger, you need to consume that on prem and you need that small footprint that you can easily expand. And, you know, we're just seeing, almost every year, it's like throwing another 400 terabytes in,

throwing another 400. It just keeps growing. It's nonstop. And, you know, one of the things that we saw, and I'm not going to mention who our previous storage provider was, but when we went to FlashBlade, on the kdb+ side we saw a 15 to 20% uptick in performance.

And, as I mentioned, we're in the process of moving to FlashBlade//S, which is supposed to be another 15 to 20% performance, with the better throughput as well. And from an architecture standpoint, it's actually allowing us to take production and development, which we had previously separated, and just put them together in the same chassis

because there's absolutely no impact. There's actually a benefit, because I get the use of the non-production blades or that capacity during the production day. So it's actually an improvement. Yeah. And I think what you've done is effectively innovated on making sure that you don't have to do a re-stacking of all of your data sets.

You can, I think, with our solutions, get very seamless growth, seamless scale, and even multigenerational; I mean, we've gone through some refreshes that were non-disruptive. Well, and also, in your typical environment where you've separated environments, I would have a copy of that in production, then I would copy it over to non-prod as well.

Just read the data now; don't care. And at the throughput rates, literally, I would have to put insane network connections in for someone to actually blow out the bandwidth on the storage device. Well... oh, sorry, I was just gonna say, one of the things I think that we're also

kind of seeing more widely across the finance industry is this: when you talk about alpha, and I'm kind of gonna go back to that moment, I'm gonna use a Formula One analogy. Is that OK with everyone? So, Formula One. If everybody has the same car and

everybody has invested the hundreds of $50 million into that car and they've squeezed every ounce of potential performance out of that car. Where, where then, you know, do you find like that, that alpha, how if everybody has the same car, where are you finding the alpha? And I think one of the things that I've seen in some customers is that they want,

they want to just really accelerate the discovery and the um the, the, the novel training and, and find new ways of just looking at the data and get that new, a little tiny bit of alpha out. But it really comes down to everybody wants to iterate very, very quickly. And data scientists and the analysts and the folks really want to go through that model as quickly as possible because that's what,

that's what's going to give your company alpha, that's what's going to give your organization that benefit. Yeah. And, quite honestly, if you can keep that model simple, if those data scientists don't have to think about where does this stuff sit, do I need to do something different? It's like,

no, it's there just go use it and we're actually seeing some organizations create these really interesting um like data scientist shared services in order to help that discovery happen really frictionless and not necessarily come to you for, they go to the self service portal where they can not only spin up models in a very safe space but also the data set that they're allowed to see and kind of work on.

And train on. Yeah, because for, for us like the, the data that we capture is data that we spent a lot of money to subscribe to in the first place. Um So, you know, with that subscription model, I'm entitled to keep it. And you know, that is, you know, incredibly valuable. There's actually people out there on the street

that will sell you, you know, petabytes of, you know, historical data. Yeah. Weren't you involved in that options? It Yeah, absolutely. And is in the audience and uh now you're, you're forever in the recording. Exactly. Immortalized in the Victor.

I did wanna say though, you know, I think quite honestly Formula One is the coolest thing that Pure does, and the fact that this is only the first time I've heard this come up in one of these sessions makes me a little sad. Brings to mind that I'm gonna try to go to the My Touchtone because I'm trying to be able to present that. Hopefully one of our Formula One events to our

customer shame. Yeah, I mean, Schumacher days. Come on. Who's watched Formula One since Schumacher? All right. All right. Great. And who's been watching Formula One since the Netflix documentary?

All right. Yeah, me too. All right. Good stuff. Awesome. So let's uh we'll dive in certainly, you know, almost saving the best for last, right. Everyone's favorite topic is increasing return on investments, right? So when you think about it,

everything we've been talking about, generation of ideas, testing, et cetera, one of the things where there's often some give and take is the quality and the consistency of the environments that support all this. People think, "Oh, yeah, generate alpha," right? What they fail to realize is there's like hundreds,

if not thousands of people globally sprinting day in and day out trying to make that happen, right? So if they're hindered by their environment in any way, shape, or form, that really kind of limits the potential, right? And so, Vic, this one's going to you. When you are thinking about AI and ML and the underlying infrastructure that

supports it, whether that's your data platform or your technology platform, are there any moments in your career that really stand out, maybe a specific project or a point in time where technology really helped or really hindered an effort that you were trying to make or a goal you were trying to achieve? Yeah. So I'm gonna bring this up

pretty high level. Um So when, when I had a customer that basically had invested a great deal in very static infrastructure, um and it it really was static and when they started to try to do some more innovative models or when they started to push the envelope on their, on their static infrastructure.

They started to test with something that was not going to be anything like production. And so what we really got to with them was, well, you know, you have to really look at your initial pilot phase, and we all know that there are pilot phases of AI/ML happening in buckets in large organizations.

Um And sometimes uh because the lines of businesses don't necessarily cross, cross talk with each other, they don't know that this like innovation is happening, but they start with this unit. That's not necessarily a really very scalable unit. It has a lot of tech debt, it's very static. Um It's not like cloud native in the sense of its application deployment strategy.

So what most customers are getting to is this really disciplined approach to that pilot. So that unit that I test on has to be composable, easy to use, automated. And then when it's time to grow to scale, of your size or other sizes, that unit is now a multiplication unit that you can use.

So there has to be discipline in that initial pilot phase, as much discipline as you would have when you go into a production phase, right? Because if you're going to production, now it's your customers' data, it's your business. And what we're seeing is this shift from doing pilots that were not

necessarily built for scale to now doing it the right way, with the right unit that can scale out. Yeah. Absolutely. And, you know, that's one of the reasons we're looking to combine prod and non-prod, for the simple fact that you're using the same vehicle. You know, if you're doing something bad there,

we're gonna know about that too. Because, you know, all of this stuff always starts with something like that. It's, oh, you know, it worked fine in dev, and then you roll to production and suddenly you're blowing up the network. Yeah, use it in anger a little bit and see how the results vary. You know, instead of two symbols,

do 20,000 symbols and suddenly, you know, that, that changes the game. Absolutely. No, that, that's outstanding. So, I mean, you know, this one is, is really for you. I just want to kind of sum up some of the things that we've been talking about at, at a high level here. So allow you to for a moment.

Yeah. So, you know, I think overall AI/ML is a constantly evolving beast. There's new research happening pretty regularly. I sit and look at thesis papers and read through some new innovative approaches to doing any particular task in the data pipeline for AI/ML. It's complex and it's ever evolving. So if you have a very static approach to it,

you're not going to be able to meet that demand or even know what the next new thing is. I mean, I think I was pretty surprised when everybody started talking LLMs. And I was like, oh, OK. So now the topic of natural language processing has become very household. So because it's so complex and

there are new tools available all the time, you definitely wanna be able to apply those tools to your goals. Discovery and iteration is the key. That's how customers are really getting value out of their AI/ML initiatives, and allowing their data scientists, these high-value folks, to really go and do their core competence, which is, like, go do your data scientist stuff, and giving them an approach

to do that on demand is something that definitely allows that. And then, last but not least, agility is going to be an important factor. You might have to re-stack your tools. So if your tools need to be re-stacked, well, how about not having to do it for your underlying data set? And especially when we talk about the data sets in the modern age,

tens of petabytes, potentially hundreds of petabytes of information. That's a heavy lift; that's a multi-year effort. So let's try to take away that re-stacking. Let's try to take away the impact of that to the business and provide agility to you and your lines of business. Well, the slide you had before, return on

investment, the one thing we didn't talk about was investment. You know, in terms of pricing, I'm seeing pricing now for FlashBlade and FlashBlade//S that is competing with local spinning disk. You know, I can do stuff cheaper on prem than I can do in the cloud with just the base pricing that's out there.

That's pretty common. And yeah, so you know, that, that's, that, that's an exciting thing too because it never used to be that way. And you know, the, the prices just keep coming in and coming in. Yeah, and we're seeing some, some repatriation too. Uh, folks that are doing high demand training on big data sets that require a lot of

performance. They're looking at it critically and saying, OK, well, maybe we can do this back on prem, and there's some repatriation happening. Obviously, it's kind of a two-way street. It's gonna land somewhere in the hybrid world, I think. Yeah, but, you know, I don't want to diminish cloud in any way,

but when you start moving enormous data sets back and forth, now you're talking about ingress, egress fees, you need huge pipes to do that. And you know, it, it starts to kind of like diminish the returns of the cloud when you're basically trying to create your on prem out in the cloud. Yeah, exactly. Sure.

Well, that's great guys. I mean, we've, uh we've riffed here just about, I'd say, you know, 30 plus minutes, we've gained a ton of insight across a variety of really interesting topics. So, Greg, first and foremost, thank you for being here, not only as an attendee but as a speaker for Accelerate, we, we really appreciate that.

You know, it's great just to hear the way you guys are utilizing AI/ML in conjunction with Pure solutions to really get ahead in the market. It's outstanding.

The infrastructure required to support modern analytics and AI has specific needs that must be addressed, including points of friction that must be eliminated for optimal efficiency, lowest TCO, and maximum agility. Representatives from JPMorgan Chase join this conversation with our data architects to share experiences and learnings that can be applied to other industries.
