
Innovation

A platform built for AI

Unified, automated, and ready to turn data into intelligence.

21:21 Video

Better Science: HW & SW Co-Design

See how Pure has taken this approach to our entire portfolio and achieved unparalleled levels of efficiency for power, density, performance and more.

Transcript

When we launched FlashBlade back in 2016, I had the great privilege of spending the next few years meeting hundreds of customers all around the world. One of my favorite discussions was when customers would play "stump the chump," where I got to be the chump. Everybody wants to ask a common set of questions, and one of my favorites is: "Hey, everybody has access to flash. So what makes Pure special?"

Or a slightly more flavorful version of that: "What in the world are you guys doing building custom hardware? Are you nuts?" So, by way of introduction, I'm Brian Gold. I was part of the founding engineering team on FlashBlade.

I've been here at Pure nine years now. I've worked across hardware engineering, software engineering, product management, solutions, and customer support; just about everything. It's been an amazing and very privileged ride. Today, what I wanted to do was go back to basics: fundamentals, first principles.

Let's talk about how we think about flash and why we build this hardware the way we do, starting from the very basics. To do that, we're going to have to dive deep. We'll have a little fun as we go, but I mean deep. So let's talk about device physics.

This is a transistor. If you haven't seen a transistor before, don't worry; it's actually not complicated. It's just a switch. What we want to do is control the flow of current from this left point to the right point. At the beginning, no current is flowing: the switch is off.

Now we apply a voltage across the top and bottom. This is a field-effect transistor, and that voltage creates an electric field, which causes a channel to form so that electrons can flow and current runs. If that voltage is above a certain threshold, the switch is now on. This is the foundation of modern semiconductor electronics.

Your laptop has hundreds of billions of these between the compute and storage elements sitting in there. Back in the 1960s, as researchers were developing this technology and learning more about its properties, they tried an interesting experiment: what happens if we jack that voltage way up, way above the threshold?

Well, a phenomenon from quantum mechanics kicks in at that point. It's called quantum tunneling: enough energy is provided to some of those electrons that, probabilistically, they tunnel through this incredibly thin dielectric. It's basically a film of glass, about 75 atoms thick. Once they're there, they're trapped.

You can cut the power entirely, and those electrons will stay there for years. While they're there, they change the electric field, and you can observe this, because it now takes a different threshold voltage to turn that switch on. If we take thousands of these transistors and plot the distribution of that threshold voltage with and without charge trapped, we see two distinct values. We've stored a bit using quantum mechanics, we can read it out, and we can keep it for years.

And if, beyond telling apart a lot of electrons from no electrons, we can also tell when some electrons or just a few electrons are trapped in that cell, then we can store a couple of bits of information. We know this as SLC flash, MLC flash, TLC, QLC, and so forth. So let's just pause and appreciate this. You probably didn't know you were walking up for a quantum mechanics lesson, but let's have a little fun.

Using a single device like this, which is absolutely minuscule in size, we are storing multiple bits of information and making it more and more dense with every generation. That's pretty incredible. Let's go further and talk about some pretty advanced manufacturing. If we take that transistor and turn it on its side, we stretch it out; we stretch the materials out.

We can stack these cells vertically in layers, which is what the industry calls 3D NAND. We're now getting production parts with well over 100 layers, further increasing the density. We take these strings, these columns of really dense cells, and pack them as far as the eye can see, if the eye could see inside the width of a human hair.

These are incredible feats of engineering. We manufacture them on the most advanced semiconductor processes ever created, and then we stack them up to increase density even further. These are wire bonds that connect stacks of chips. We put those into packages, which go onto circuit boards, things you might recognize, and then into data centers full of them.

And that's how we get to petabyte and exabyte scale. It's pretty amazing when you stop and think about it. A petabyte of storage is eight quadrillion bits, all stored using quantum mechanics, device physics, and some of the most advanced manufacturing technologies ever created. Sometimes we lose sight of all the science going on under the covers. I don't know about you, and I'm not a physicist by training, but I think this is just really freaking cool.

Except, as a lot of us know, it's not quite perfect. It's decidedly not awesome when you actually look at what's happening under the covers. What do I mean? Well, there's no free lunch.
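To make the arithmetic in the last few paragraphs concrete, here is a minimal Python sketch: an n-bit cell must distinguish 2^n threshold-voltage levels (SLC through QLC), a read classifies the measured voltage against 2^n - 1 reference voltages, and a decimal petabyte is 8 × 10^15 bits. The evenly spaced reference voltages and the function names are hypothetical illustrations; real NAND uses carefully tuned, unevenly spaced references and Gray-coded level-to-bit mappings, and raw cell counts here ignore ECC, spare area, and over-provisioning.

```python
from bisect import bisect_right

CELL_TYPES = {"SLC": 1, "MLC": 2, "TLC": 3, "QLC": 4}  # bits stored per cell
PETABYTE_BITS = 8 * 10 ** 15  # decimal petabyte: 10**15 bytes x 8 bits


def levels_for(bits_per_cell: int) -> int:
    """An n-bit cell must distinguish 2**n threshold-voltage levels."""
    return 2 ** bits_per_cell


def read_level(vth: float, ref_voltages: list) -> int:
    """Classify a measured threshold voltage against sorted read references.

    An n-bit cell needs 2**n - 1 references; the result is a level index
    from 0 (below the first reference) to 2**n - 1 (above the last).
    """
    return bisect_right(ref_voltages, vth)


def cells_per_petabyte(bits_per_cell: int) -> int:
    """Raw cells needed for one petabyte (ignores ECC, spares, over-provisioning)."""
    return PETABYTE_BITS // bits_per_cell


for name, bits in CELL_TYPES.items():
    print(f"{name}: {levels_for(bits):>2} levels, {cells_per_petabyte(bits):,} cells per PB")

# Hypothetical, evenly spaced references for a 2-bit (MLC) cell: 3 boundaries, 4 levels.
mlc_refs = [1.0, 2.0, 3.0]
print(read_level(0.4, mlc_refs), read_level(2.5, mlc_refs))
```

Each extra bit per cell doubles the number of levels that must fit in the same voltage window, which is exactly where the "no free lunch" costs in the next paragraphs come from.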

All this extra information density, this bit density, the tricks we're playing: it comes at a cost. I told you we have these nice distributions. This is a QLC voltage distribution, showing how we determine four bits per cell using 16 different levels.

It doesn't actually look like this in practice. It looks more like this: the levels overlap. So we have to apply some of the most sophisticated signal processing ever created, basically like communicating with a Mars rover but at gigabytes per second, just to recover the values reliably. And even this still isn't quite accurate; this isn't really how it works.
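Why overlapping levels need heavy signal processing can be seen with a simple model. This sketch assumes each level's threshold voltage is Gaussian with standard deviation `sigma`, and that the read reference sits halfway between adjacent level means; under those assumptions (not a description of any vendor's actual read path), the raw probability of misreading a cell toward one neighbor rises sharply as the level spacing shrinks.

```python
import math


def misread_probability(level_spacing: float, sigma: float) -> float:
    """Probability that a cell's threshold voltage lands past the midpoint toward one neighbor.

    Model assumptions: Vth ~ Normal(mu, sigma**2) around the level mean, and the
    read reference sits level_spacing / 2 above mu.
    P(X > mu + d/2) = 0.5 * erfc((d/2) / (sigma * sqrt(2))).
    """
    return 0.5 * math.erfc((level_spacing / 2) / (sigma * math.sqrt(2)))


# Hypothetical numbers: packing more levels into a fixed voltage window shrinks
# the spacing between them; compare a wide and a narrow spacing at the same sigma.
for spacing in (1.0, 0.25):
    rate = misread_probability(spacing, sigma=0.1)
    print(f"spacing {spacing:.2f} V -> raw misread rate {rate:.2e}")
```

In this toy model, shrinking the spacing while the spread stays the same turns a vanishingly rare error into a frequent one, which is why error correction and adaptive read references become mandatory as bits per cell go up.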

The values in those distributions shift over time, and almost every block and every plane can be a little different. So we have to track those changes to recover the data more reliably. Except it's still not quite like that either: it degrades over time with usage. These are the realities, and we've known them ever since we first started working with NAND.

It has limited endurance, and it can be difficult to read at times. The thing we have to keep in mind is that every generation gets worse. All the things we're doing to improve the economics and the density come at a cost; there's no free lunch.

So when we think about future system designs at Pure, and in the industry generally, we have to ask how we're going to solve these challenges. Of course, there's a cohort in our industry just waiting to tell us "we told you so," and that the past, present, and future of storage is in fact hard drives.

I don't know how much you know about magnetic storage technology and what's happening in the hard drive landscape, but if there's one technology with harder physics problems right now, it's hard drives. They're trying to strap either lasers or microwaves to the head to heat up the track spinning underneath, to further increase the information density.

It'll work, at least in a lab. If it gets to volume production, guess what? It's still a hard drive. It's still slow and unreliable, and it probably consumes a little more power because you're heating stuff up. We've got to do better than this.

So if all-flash really is our future, let's take all the complexity of the underlying NAND and simplify it down as best we can. There are three fundamental problems we know we have to solve. First, we have an endurance challenge: we have to solve for what is effectively write amplification.

That is, how many extra writes do we do to the flash for every user-requested write that comes in? Second, with every generation, as we increase the density, program and erase operations take longer, because of the time it takes to place those extra levels in the distribution. In a large-scale system that may not affect the average latency, but it definitely affects the tail latencies.

I'll walk you through that. And last but not least, if we solve these first two problems by adding extra layers of caching and buffering, things that essentially increase hardware resources, then we've quite possibly walked back the efficiencies we gained by moving to that next-generation media. So we've got to tackle all of these together, comprehensively.
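The first of the three problems, write amplification, is usually expressed as a ratio: bytes physically programmed to flash divided by bytes the host asked to write. The sketch below computes that factor and a rough endurance budget from it. The 1000 program/erase-cycle rating and the 100 TB / 250 TB figures are hypothetical, chosen only to show how the factor divides the usable lifetime.

```python
TB = 10 ** 12
PB = 10 ** 15


def write_amplification(host_bytes: int, flash_bytes: int) -> float:
    """WAF = bytes programmed to flash / bytes written by the host.

    1.0 is ideal: every flash write was a user write. Anything above 1.0 is
    extra wear from internal work such as garbage-collection copies.
    """
    if host_bytes <= 0:
        raise ValueError("host_bytes must be positive")
    return flash_bytes / host_bytes


def lifetime_host_writes(capacity_bytes: int, rated_pe_cycles: int, waf: float) -> float:
    """Approximate host data a device absorbs before exhausting its rated P/E cycles."""
    return capacity_bytes * rated_pe_cycles / waf


# Hypothetical: the host wrote 100 TB, but the flash absorbed 250 TB of programs.
waf = write_amplification(host_bytes=100 * TB, flash_bytes=250 * TB)
for factor in (1.0, waf):
    pb = lifetime_host_writes(TB, rated_pe_cycles=1000, waf=factor) / PB
    print(f"WAF {factor:.1f}: about {pb:.1f} PB of host writes per TB of flash")
```

Because the factor divides directly into the endurance budget, halving write amplification roughly doubles how much user data the same media can absorb, which matters most for low-endurance media such as QLC.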

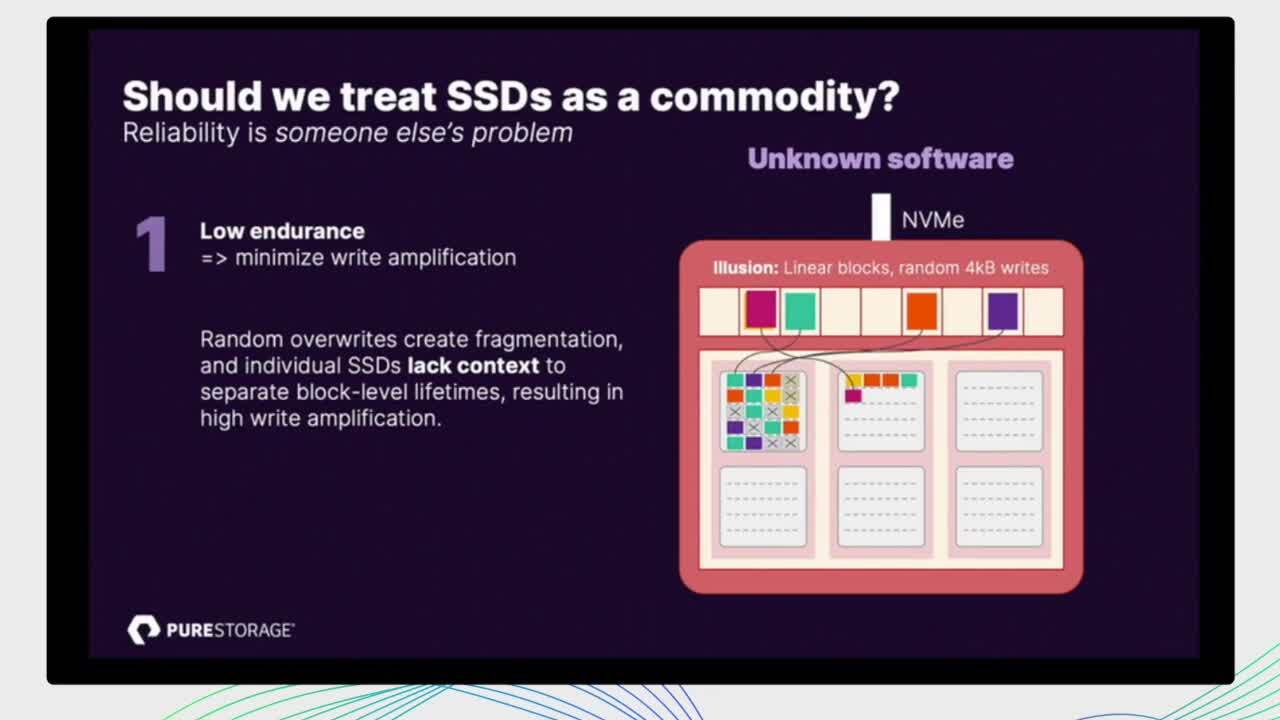

So let's take a look at how this is basically solved in the rest of the industry. The rest of the industry is taking the classic, age-old approach of "let's just make it somebody else's problem." We'll buy these SSDs; they're commodity parts produced at massive scale. We'll let Kioxia, Micron, SK hynix, and the other manufacturers worry about the details of the NAND. We'll just buy the SSD, use NVMe, and it'll be great. The systems vendors want to focus on the software. It's a logical approach, but let's see where it breaks down.

It's built on an abstraction that makes the media underneath look like a traditional hard drive. Yes, it's NVMe, so the protocol itself is efficient, but we're essentially adding a layer of illusion that allows software to do random, in-place, 4-kilobyte sector overwrites. This is not how flash behaves under the covers, but it's very convenient if you're writing software.

So let's look at each of our three problems in turn, starting with the write amplification challenge. In flash, we have to look beyond this illusion. The illusion is created by what we know as the flash translation layer, which remaps these sector addresses.

Inside an SSD, the drive places those sectors linearly, in an append-only fashion on the flash, because that's how flash has to be written. A block, one of these white rectangles here, has to be erased in full, and then you write to it sequentially. The problem is that over time we end up overwriting values at the same logical addresses.
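A minimal, hypothetical flash translation layer shows the remapping just described: the host sees overwritable logical sectors, while every write is appended to the open block and an overwrite only marks the old physical page as stale. `TinyFTL` and its fields are illustrative names, not any drive's firmware; real FTLs add wear leveling, power-loss protection, and much more.

```python
from dataclasses import dataclass, field


@dataclass
class TinyFTL:
    """Toy FTL: overwritable logical sectors on top of append-only flash blocks."""
    pages_per_block: int
    l2p: dict = field(default_factory=dict)     # logical sector -> (block, page)
    invalid: set = field(default_factory=set)   # physical pages holding stale data
    blocks: list = field(default_factory=list)  # logical sector written in each page

    def write(self, lba: int) -> tuple:
        # An overwrite never modifies the old page in place; it only marks it stale.
        if lba in self.l2p:
            self.invalid.add(self.l2p[lba])
        # Append to the open block, starting a fresh (erased) block when it is full.
        if not self.blocks or len(self.blocks[-1]) == self.pages_per_block:
            self.blocks.append([])
        self.blocks[-1].append(lba)
        location = (len(self.blocks) - 1, len(self.blocks[-1]) - 1)
        self.l2p[lba] = location
        return location


ftl = TinyFTL(pages_per_block=4)
for lba in [0, 1, 2, 3, 0, 1]:  # four fresh writes, then two overwrites
    ftl.write(lba)
print(ftl.l2p[0], sorted(ftl.invalid))
```

After the two overwrites, sectors 0 and 1 live in block 1, and block 0 still holds their stale copies alongside live data for sectors 2 and 3: the mixed state that garbage collection has to clean up.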

As those overwrites come in, we have to mark some of the prior locations, those sectors, as no longer needed. They're garbage, as we call it. At some point enough garbage builds up in a block, and when we need free space, we garbage collect. But as the cartoon shows, it's not all garbage, so we have to copy out anything that doesn't have a little X on it.
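The cost of copying out the live pages can be estimated with a small simulation. This sketch assumes uniformly random overwrites across the logical space, a greedy victim policy (erase the closed block with the fewest live pages, a common textbook choice, not a claim about any particular SSD's firmware), and one erased block kept in reserve so collection always has room to copy into. It reports the steady-state write amplification for a given fill level; `simulate_waf` and its parameters are hypothetical, and `utilization` should stay well below 1.

```python
import random


def simulate_waf(utilization=0.8, num_blocks=64, pages_per_block=32,
                 host_writes=50_000, seed=0):
    """Greedy garbage collection on a toy log-structured FTL.

    Returns flash page programs / host page writes, measured after the
    logical space has been filled once.
    """
    rng = random.Random(seed)
    logical_pages = int(num_blocks * pages_per_block * utilization)
    valid = [set() for _ in range(num_blocks)]  # live logical pages in each block
    written = [0] * num_blocks                  # pages programmed since last erase
    where = {}                                  # logical page -> block with live copy
    free = list(range(1, num_blocks))           # erased blocks; block 0 starts open
    open_block = 0
    flash_writes = 0

    def program(lpn):
        nonlocal open_block, flash_writes
        if written[open_block] == pages_per_block:
            open_block = free.pop()             # switch to a freshly erased block
        if lpn in where:
            valid[where[lpn]].discard(lpn)      # the previous copy becomes garbage
        valid[open_block].add(lpn)
        where[lpn] = open_block
        written[open_block] += 1
        flash_writes += 1

    def garbage_collect():
        # Greedy victim: the full (closed) block with the fewest live pages.
        closed = [b for b in range(num_blocks) if b != open_block and b not in free]
        victim = min(closed, key=lambda b: len(valid[b]))
        for lpn in list(valid[victim]):         # copying live data = extra flash writes
            program(lpn)
        written[victim] = 0                     # "erase" the victim
        free.append(victim)

    def host_write(lpn):
        # Keep one erased block in reserve so collection always has room to copy into.
        if written[open_block] == pages_per_block:
            while len(free) <= 1:
                garbage_collect()
        program(lpn)

    for lpn in range(logical_pages):            # warm-up: fill the logical space once
        host_write(lpn)
    flash_writes = 0                            # measure steady state only
    for _ in range(host_writes):
        host_write(rng.randrange(logical_pages))
    return flash_writes / host_writes


for u in (0.5, 0.8, 0.9):
    print(f"utilization {u:.0%}: write amplification ~ {simulate_waf(u):.2f}")
```

The fuller the device and the more randomly data dies, the more live pages each victim still holds, so write amplification climbs. That is the effect placement with system-level context tries to avoid.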

We've got to track all this, copy it out, and rewrite it. That rewriting is write amplification: we just ate into our endurance budget, which is so precious to us. And the problem in an SSD is that, because we're hiding behind this illusion layer, there's no context. The SSD manufacturer does an incredible job trying to optimize its placement algorithms, but it knows nothing about the software above.

Second problem: when a block erase is ongoing. Blocks are grouped into what are essentially dies, and you can only have one outstanding operation to a die at a time.

So if I'm erasing this block, I cannot read another, completely logically independent piece of data that lives in the same die. That read may be very critical to your user workload, but it's stuck behind that erase. And as erase times get longer and longer, you start getting tail latencies that look like hard drives all over again; they can easily be tens of milliseconds. Again, the problem is that illusion layer. We know nothing about what's happening or when it's happening, and as software developers above that block interface, we have no control over how to place and schedule these operations.

And finally, SSDs are massively over-provisioned in both the flash and the DRAM required to create that illusion layer. It's not free. A typical rule of thumb for pretty much any SSD you're going to find is a 1000-to-1 ratio. So if you have a 1-terabyte SSD, it's got about a gigabyte of DRAM inside the drive to maintain those mapping tables and deliver the random read performance you would expect. A gigabyte doesn't sound so bad in a desktop machine.

It's not a huge deal there. But keep in mind: if you build a 10-petabyte storage system, that's 10 terabytes of DRAM inside the SSDs. And if you've looked recently, DRAM is not cheap. So you just took the economics of, say, QLC media and made them much worse by strapping on a huge amount of DRAM that is not usable for anything other than preserving an illusion that makes it a little more convenient for the storage vendor to write software.

And of course, to mitigate the scheduling problems of garbage collection, the drives keep a buffer of over-provisioned blocks. That avoids performance falling off a cliff when there's suddenly no free space and the drive has to garbage collect before it can accept the next write. So the drives are constantly trying to create free space, and they keep around 20% of their flash as a hidden buffer, unusable by any software above, just to try to mitigate the performance problems. We have to do better than this.

And I want to be clear: SSDs are marvels of modern engineering. I would never want a laptop with anything other than a state-of-the-art SSD in it. This isn't a knock against SSDs in any way, except when we build really large-scale storage systems out of them, where at this point in time they're just inappropriate. To get the best economics, the best scale, and the best energy efficiency, we have to go further. So let's look at how we do this at Pure with DirectFlash. Again, the same three problems. We have this endurance challenge from the media, all the way from the physics up.
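The DRAM overhead in the commodity-SSD discussion can be checked with simple arithmetic. The sketch below applies the talk's 1000-to-1 rule of thumb to a 10 PB system and compares it with a flat page-level mapping table. The 4 KiB mapping granularity and 4-byte entries are assumptions (a common layout that works out to about 1/1024 of capacity), not a statement about any specific drive.

```python
KIB = 1024
TB = 10 ** 12
PB = 10 ** 15


def rule_of_thumb_dram(flash_bytes: int, ratio: int = 1000) -> int:
    """Drive DRAM under the ~1 byte per `ratio` bytes of flash rule of thumb."""
    return flash_bytes // ratio


def page_map_bytes(flash_bytes: int, page_bytes: int = 4 * KIB, entry_bytes: int = 4) -> int:
    """Size of a flat logical-to-physical table with one entry per mapped page.

    Assumed layout: 4 KiB mapping granularity and 4-byte physical addresses.
    """
    return flash_bytes // page_bytes * entry_bytes


system = 10 * PB
print(f"1000:1 rule of thumb:     {rule_of_thumb_dram(system) / TB:.2f} TB of DRAM")
print(f"4-byte entry per 4 KiB:   {page_map_bytes(system) / TB:.2f} TB of DRAM")
```

Both estimates land near 10 TB, and in this design all of it exists only to maintain the mapping illusion inside each drive, independent of how much performance the system actually delivers.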

How do we deal with that? Well, DirectFlash allows our software, Purity, to control the physical placement. This is FlashArray and FlashBlade. We can see all the way down into the die, the blocks, the LUNs, the planes, the pages, every piece of it, and we can place data and metadata based on what we know at a system level.

We have a lot more context about when data is written together and when it's likely to die together, that is, become garbage or get overwritten. We take these patterns, and that allows us to co-locate data that's going to have a similar expected lifetime. So when a block in our systems is mostly garbage, it's likely all garbage, and as a result we get effectively no write amplification: when we're ready to erase it, it's essentially free from a write amplification point of view.

This is more than theory and PowerPoint pictures; it's very real in practice. We monitor annual return rates very carefully for our DFMs, the DirectFlash Modules, and over time you can see the trajectory we've been on as a company.

This is annual return rate, so lower is better: we want to get fewer broken parts back. The typical industry average for an SSD is shown in blue. We were already doing better than that in the original FlashArray//m and FA-400 series, if you remember back that far, just by writing software in Purity that laid out data the way flash wants to be written.

You can see we made improvements, but the advantage we got from moving to DirectFlash, with visibility all the way into the NAND, is profound. This is arguably the key graph at the company for how we can do QLC.
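Why co-locating data with similar expected lifetimes removes garbage-collection copies can be shown with a toy count. In this sketch each page carries a hypothetical expiry time (when it will be overwritten or deleted), and every block that contains any garbage is reclaimed by first copying out its live pages. Sorting by known expiry is an idealization: a real system has to predict lifetimes from context and telemetry, and this is not Pure's placement algorithm.

```python
SHORT, LONG = 10, 1000  # hypothetical expiry times for short- and long-lived data
PAGES_PER_BLOCK = 4


def chunk(pages: list, size: int) -> list:
    """Fill blocks in order, `size` pages per block."""
    return [pages[i:i + size] for i in range(0, len(pages), size)]


def gc_copies(blocks: list, now: int) -> int:
    """Live pages that must be copied before erasing every block holding garbage.

    A page is garbage once now >= its expiry time.
    """
    copies = 0
    for block in blocks:
        live = sum(1 for expiry in block if expiry > now)
        if live < len(block):  # the block holds some garbage, so it gets reclaimed
            copies += live
    return copies


arrivals = [SHORT, LONG] * 4                               # interleaved as the host sends them
mixed = chunk(arrivals, PAGES_PER_BLOCK)                   # placement by arrival order
grouped = chunk(sorted(arrivals), PAGES_PER_BLOCK)         # placement by expected lifetime

print("mixed placement:  ", gc_copies(mixed, now=SHORT), "copies")
print("grouped placement:", gc_copies(grouped, now=SHORT), "copies")
```

With arrival-order placement every block is half garbage, so reclaiming the space costs copies; with lifetime grouping the short-lived block dies all at once and the long-lived block is left untouched.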

We've talked about this a little, but not nearly enough over the last few years. This is what we did to enable FlashArray//C, and it's what you're seeing in the new FlashBlade//S. But of course, it doesn't stop there. We don't just build better algorithms, ship them, and call it done. We can look at the telemetry we get back through Pure1, observe real-world access patterns, and further optimize the co-location of data and metadata and our lifetime prediction. We've done that, so it has actually gotten better over time thanks to this convenient feedback loop.

The second problem is tail latencies, which theoretically keep getting worse as program and erase times get longer. Well, let me tell you, there's no great magic trick. If you do fewer writes in the first place, you have fewer die conflicts. So we're almost cheating by claiming credit here, because we already did that work in reducing write amplification. But we can go further, because we know exactly where those conflicts are going to occur.
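The die-conflict effect on tail latency can be illustrated with a small model. This sketch assumes a read finds its target die busy erasing with probability `erase_duty` and, if so, waits for a uniformly random remainder of the erase. The 100 µs read and 10 ms erase times are hypothetical round numbers, and the percentile helper is a simple index-based approximation.

```python
import random

READ_US = 100      # hypothetical page-read time, microseconds
ERASE_US = 10_000  # hypothetical block-erase time (10 ms)


def read_latencies(n_reads: int, erase_duty: float, seed: int = 0) -> list:
    """Sorted read latencies when the target die is mid-erase a fraction erase_duty of the time."""
    rng = random.Random(seed)
    latencies = []
    for _ in range(n_reads):
        # A read that collides with an erase waits for the rest of that erase first.
        wait = rng.uniform(0, ERASE_US) if rng.random() < erase_duty else 0.0
        latencies.append(wait + READ_US)
    return sorted(latencies)


def percentile(sorted_values: list, p: float) -> float:
    """Simple index-based percentile on pre-sorted data."""
    idx = min(len(sorted_values) - 1, int(p / 100 * len(sorted_values)))
    return sorted_values[idx]


for duty in (0.05, 0.005):
    lat = read_latencies(100_000, duty)
    print(f"erase duty {duty:.1%}: p50 {percentile(lat, 50):.0f} us, "
          f"p99 {percentile(lat, 99):.0f} us, p99.9 {percentile(lat, 99.9):.0f} us")
```

The median barely moves, but the p99 is dominated by erase collisions; fewer writes mean fewer erases, a lower duty cycle, and a shorter tail.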

We can proactively rebuild if we want to really minimize latency for a user-facing operation. We do this in FlashArray, and have for a very long time: we rebuild from system parity, because we know exactly when that conflict is going to happen. So we don't have to wait, notice the read is taking a while, and only then try something else, adding traffic and hoping it comes back.
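Rebuilding from parity instead of waiting can be sketched with single XOR parity, the simplest erasure code; the talk does not say which code Purity uses, so treat this only as an illustration of the idea. The sketch assumes one shard per die, that the scheduler already knows which dies are busy (`busy_dies`), and that every other die in the stripe is idle.

```python
from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Bytewise XOR of two equal-length buffers."""
    return bytes(x ^ y for x, y in zip(a, b))


def make_parity(shards: list) -> bytes:
    """Single XOR parity over a stripe of equal-length data shards."""
    return reduce(xor_bytes, shards)


def read_shard(shards: list, parity: bytes, index: int, busy_dies: set) -> bytes:
    """Read shard `index`; if its die is known to be busy, rebuild it from peers + parity."""
    if index not in busy_dies:
        return shards[index]
    peers = [s for i, s in enumerate(shards) if i != index]
    # parity ^ (all other shards) leaves exactly the missing shard.
    return reduce(xor_bytes, peers, parity)


stripe = [b"AAAA", b"BBBB", b"CCCC"]
parity = make_parity(stripe)
print(read_shard(stripe, parity, 1, busy_dies={1}))
```

The rebuild costs extra reads on the other dies, which is why it only pays off when the system knows the target die is tied up by a long erase rather than guessing after a timeout.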

We know exactly when that conflict is going to occur, and so we've been able to push tail latencies down to a minimum. It's still a very hard problem, and there's no getting around the physics underneath, but we use every available tool at the system level to mitigate it.

And finally, here's the most boring picture I have in this entire presentation: there is no extra DRAM for creating an illusion. There is DRAM in the DirectFlash Module, and it's sized for performance: a certain number of outstanding IOs, plus the NVRAM capability we use in FlashArray//XL and FlashBlade//S, which is integrated into the DFM. There's a bit of DRAM in there, but it's sized for performance. That's critical to how we think about building really capacity-centric systems: we want expensive resources like DRAM to scale with the performance we offer, which lets us offer customers a huge variety of configurations to choose from.

And there's no over-provisioning at the drive level. We have over-provisioning at the system level, but we're not stacking the two. That's really the problem we see in a lot of competing systems built on commodity SSDs: you have over-provisioning in the drive and also over-provisioning at the system level, because the two are built without any cooperation. In really large-scale systems we've got to do better, and that's exactly what we're doing.

You can see this if you take all these things and play out where we are and where we're going. We are now able to scale DFM capacities basically as a function of what we can get from the semiconductor vendors, and that's how you see a 48-terabyte DFM today. If you think that's impressive, we've got some big plans in the works, so we're accelerating our advantage over commodity SSDs, and certainly over the folks strapping lasers onto the heads of magnetic media.

All of this gets rolled up into a simplified picture when we come and talk to you as customers or partners about what DirectFlash is and its software and hardware components. But there's a lot of science happening under the covers: things we didn't invent here but have certainly built upon, and we've tried to educate ourselves as best we can to pull those levers on the airplane, to borrow an analogy from earlier today. The thing is, we've been doing this for a long time. We've got a 10-year head start, and we're really proud of it. I've been here nine years, and I'm as excited now about where we are and where we're going as at any point in that time.

You now see this across the entire product line of all-flash appliances: all the FlashArray models and the new FlashBlade//S, where we've made a big push, doubling down on co-design to get the increased capacity to really take on disk and to go further in performance. Our goals for FlashBlade//S were to double basically every spec over the prior generation: double the performance, double the density, and, really importantly, double the power efficiency, since that matters to so many of us. The only way we're going to do that is through this co-design.
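The cost of stacking over-provisioning at both the drive and system levels compounds multiplicatively, since each layer holds back a fraction of whatever the layer below exposes. In this sketch the 20% drive-level reserve echoes the talk's figure, while the 10% system-level reserve and the 10 PB raw capacity are hypothetical values chosen for illustration.

```python
def usable_fraction(*reserve_fractions: float) -> float:
    """Fraction of raw flash left after each independent layer holds back its reserve."""
    usable = 1.0
    for reserve in reserve_fractions:
        usable *= 1.0 - reserve
    return usable


RAW_PB = 10
DRIVE_OP = 0.20   # reserve hidden inside each commodity SSD (the talk's ~20%)
SYSTEM_OP = 0.10  # hypothetical system-level reserve

stacked = usable_fraction(DRIVE_OP, SYSTEM_OP)  # both layers reserve independently
single = usable_fraction(SYSTEM_OP)             # reserve held only at the system level
print(f"stacked reserves: {stacked * RAW_PB:.1f} PB usable of {RAW_PB} PB raw")
print(f"system-level only: {single * RAW_PB:.1f} PB usable of {RAW_PB} PB raw")
```

Under these assumed numbers, removing the drive-level layer recovers close to 2 PB of the same raw flash, which is the economic point behind not stacking the two reserves.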

The only way we can build a system like this is by really understanding the properties of the media and working our way up from there. And this theme of better science that you heard in some of today's keynotes: DirectFlash is just the beginning. Inside our R&D organization, we're really passionate about some very deep work we've done across our systems.

It's happening in FlashArray and FlashBlade, in Portworx, and in Cloud Block Store, and we're eager to talk more openly and transparently with the public about what we're up to, because we think we have a huge differentiation in the market, and it really is accelerating our trajectory.

Thank you all very much. I believe the way this is supposed to work is that we'll hang out and take questions socially afterward. Thanks, everybody, for coming. I hope you enjoyed the show today, and be safe.

Alan Kay once said, “The people who are really serious about software should make their own hardware.” This is true today more than ever for the most successful technology organizations, from the world's favorite smartphone manufacturer to the most popular electric vehicle company. These companies understand that efficiency is essential to building great products and it requires co-innovation of hardware and software.

