Our Products

Systems

FlashArray//XL™

Gain enterprise-grade performance without enterprise complexity with Pure’s NVMe storage array solution.

FlashArray//X™

From entry-level to enterprise applications, accelerate business results with your most critical data.

FlashArray//C™

Enterprise-grade QLC delivers where hybrid can’t. Get NVMe performance, hyper-consolidation, and simplified management.

FlashArray//E™

The all-flash advantage at disk economics, targeting 750TB-6PB.

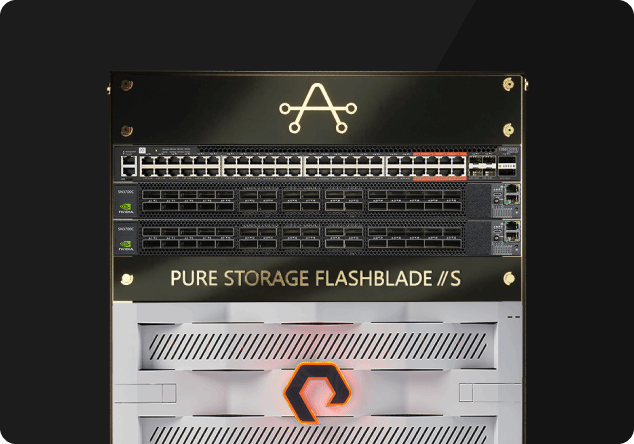

FlashBlade//S®

The only scale-out storage platform that efficiently powers your modern unstructured data needs—delivering cutting-edge capabilities without growing complexity.

FlashStack®

FlashStack combines compute, network, and storage to provide a modern infrastructure platform.

AIRI//S™

Simplify AI deployment and scale quickly and easily, enabling your data teams to focus on delivering insights, instead of managing IT.

Introducing the Everpure//E Family

Economical. Efficient. Everlasting. All-flash at the cost of disk—without the costs of disk.

Subscriptions

Evergreen//One™

Shift to a consumption-based storage model with Evergreen//One—a fully flexible enterprise-grade storage subscription service.

Evergreen//Flex™

Get a subscription that provides flexibility to respond to changes in demand and use. Increase your storage agility and maximize ROI on capacity usage with lower upfront costs.

Evergreen//Forever™

Gain the advantage of Evergreen//Forever—Pure’s subscription model that delivers seamless, rapid upgrades and expansion, without disruption.

Software

Pure1®

Experience self-driving storage with full-stack, AI-powered data-storage management and monitoring.

Purity Operating Environment

Make every bit of your organization's data available—in the most insightful way possible—so you can take informed action.

Pure Fusion™

Bring the simplicity of the cloud operating model anywhere with on-demand consumption and back-end provisioning.

Portworx® by Everpure

Portworx® powers cloud-native apps with the most complete Kubernetes Data Services.

Pure Cloud Block Store™

Benefit from Pure Cloud Block Store with seamless data mobility, resilience, and a consistent experience—no matter where your data lives.

Everpure Protect Service // DRaaS

Get a simple, cost effective, on-demand, disaster recovery as a service (DRaaS) that offers native public cloud protection for VMware.

What is an all-flash array?

An all-flash array (AFA) is a storage system that uses only flash memory drives instead of spinning-disk drives. An all-flash array is also referred to as a solid-state array (SSA). AFAs offer speed, performance, and agility for your business applications.

Where do all-flash arrays fit into the world of data storage?

For decades, data centers were dominated by hard-disk drive (HDD) technologies configured as network-attached storage (NAS), storage area networks (SANs), or both.

With the advent of solid-state drives (SSDs), data storage companies started offering high-performance flash memory solutions, initially at a premium price, for Tier 0 and Tier 1 data applications. Because SSDs have no moving parts, they avoid the seek and rotational delays of spinning disks, so random access can be orders of magnitude faster than on HDDs.

As flash density increased and cost per gigabyte fell, flash storage became steadily more cost-effective, making all-flash versions of NAS devices and SANs economically feasible.

What are the benefits of bringing all-flash memory to the data center?

As you might guess, simply swapping your HDDs for SSDs is enough to increase the speed and performance of your NAS and SAN solutions. The benefits of an all-flash array are the benefits of flash memory itself: lower latency, higher throughput, and no moving parts to wear out.

All-flash arrays vs. hybrid storage arrays vs. HDD arrays

Generally speaking, all-flash arrays are the fastest. They are followed by hybrid arrays, which combine flash and HDDs within the same storage array. Hybrid arrays are not as fast as all-flash arrays, but their cheaper HDD capacity can be expanded for data that does not need high performance. Traditional HDD-only arrays are the slowest.
